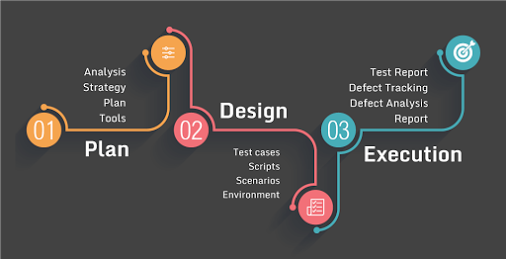

The software testing process

The testing process unfolds in several phases, each of which involves producing and consulting various types of documentation.

Some of these documents are drafted at the discretion of those involved in testing, so that testing can proceed in a formal and rigorous way; in most cases, however, they are essential to a successful process.

Test planning and control

The software testing process begins with the planning of test activities, a phase usually aimed at producing a document called the “Test Plan”.

The test plan usually covers all the types of testing planned for the application.

This document describes the application and its type, the functionalities and modules to be tested and those excluded from testing, the types of testing to be performed, the order of execution and priority of the tests, the test environments, and the hardware and software resources they require.
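As an illustration only, the contents of a test plan listed above can be modeled as a simple record. In this Python sketch the class name `TestPlan` and its field names are hypothetical, chosen to mirror the items in the paragraph, and the scope check is an assumed consistency rule rather than part of any standard.

```python
from dataclasses import dataclass, field


@dataclass
class TestPlan:
    """Hypothetical record of the main contents of a test plan."""

    application: str            # description of the application
    application_type: str       # e.g. "web", "desktop", "embedded"
    in_scope: list[str]         # functionalities/modules to be tested
    out_of_scope: list[str]     # functionalities/modules not to be tested
    test_types: list[str]       # e.g. "unit", "integration", "performance"
    environments: list[str]     # test environments
    resources: dict[str, str] = field(default_factory=dict)  # hardware/software resources

    def __post_init__(self) -> None:
        # Assumed consistency rule: a module is either in scope or out of scope.
        overlap = set(self.in_scope) & set(self.out_of_scope)
        if overlap:
            raise ValueError(f"modules both in and out of scope: {sorted(overlap)}")


plan = TestPlan(
    application="Order management service",
    application_type="web",
    in_scope=["orders", "billing"],
    out_of_scope=["reporting"],
    test_types=["unit", "integration", "performance"],
    environments=["staging"],
    resources={"database": "dedicated test instance"},
)
```

Keeping the plan as structured data makes simple checks like the scope rule automatic; the prose document remains the authoritative version.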

The planning phase begins by collecting the application requirements and project specifications, which help determine the general approach to testing and the resources needed, such as, for unit tests, specific frameworks or other software.

Next comes what is commonly called the general schedule: a list of testing activities in a given temporal order, each with an associated priority index.
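A minimal sketch of such a schedule, assuming each activity carries a position in the temporal sequence and an integer priority where 1 is the highest (a hypothetical convention). The `Activity` type and `build_schedule` function are illustrative names, not part of any tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Activity:
    name: str
    order: int     # position in the temporal sequence
    priority: int  # assumed convention: 1 = highest priority


def build_schedule(activities: list[Activity]) -> list[Activity]:
    """Sort activities by temporal order, breaking ties by priority."""
    return sorted(activities, key=lambda a: (a.order, a.priority))


schedule = build_schedule([
    Activity("performance tests", order=3, priority=2),
    Activity("unit tests", order=1, priority=1),
    Activity("user acceptance tests", order=3, priority=1),
    Activity("integration tests", order=2, priority=1),
])
print([a.name for a in schedule])
# ['unit tests', 'integration tests', 'user acceptance tests', 'performance tests']
```

Activities sharing the same `order` are assumed to run in the same time slot, with the priority deciding which one is addressed first.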

The schedule takes into account the features to be tested and the associated types of testing, such as user acceptance tests, security tests, stress tests, performance tests, other system tests, and integration tests.

At this stage, unit tests are usually outlined module by module, without going into detail, while still trying to define their test environments and the general characteristics of their input and output data.

For the other types of testing, the test plan is derived mainly from the functional requirements; for unit tests, the project’s architectural specifications are also widely used.

After any clarifications on the requirements have been requested, the information gathered in this phase is refined.

The acquired data can then be entered in detail into the test plan.

Test Analysis and Design

This phase follows test planning, so its main inputs come from the test plan, but also from the requirements definition and the formal application specifications.

The purpose of design is to gather all the information needed to implement the tests.

There are two ways to do this: expand the existing test plan, or produce separate documents linked to it.

Whether kept separate or integrated into the test plan, these documents are: test specifications, test case specifications, and execution procedures.

Test specifications are based on a further grouping of the testing activities already scheduled in the test plan.

Each group corresponds to a type of testing or a particular test; its specification describes the test environment and lists the test cases that belong to it, each with its general attributes and rationale.

A test case specification, on the other hand, is a document that identifies a particular test configuration, describing the inputs, the expected results, the execution conditions, and the hardware and software environment.
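One way to keep test case specifications close to the code is to write them as data. The sketch below assumes a hypothetical function under test, `apply_discount`, working on integer cents; `TestCaseSpec` and `run_spec` are illustrative names whose fields mirror the elements listed above.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class TestCaseSpec:
    """Hypothetical record mirroring a test case specification."""

    identifier: str
    inputs: dict[str, Any]                                     # inputs entered
    expected: Any                                              # expected result
    conditions: list[str] = field(default_factory=list)        # execution conditions
    environment: dict[str, str] = field(default_factory=dict)  # hardware/software environment


def apply_discount(price_cents: int, percent: int) -> int:
    """Hypothetical unit under test: apply a percentage discount."""
    return price_cents * (100 - percent) // 100


SPECS = [
    TestCaseSpec("TC-01", {"price_cents": 10000, "percent": 10}, 9000,
                 conditions=["prices expressed in cents"],
                 environment={"os": "Linux", "python": "3.11"}),
    TestCaseSpec("TC-02", {"price_cents": 4999, "percent": 0}, 4999),
]


def run_spec(spec: TestCaseSpec, func: Callable[..., Any]) -> bool:
    """Feed the specified inputs to func and compare with the expected result."""
    return func(**spec.inputs) == spec.expected
```

The conditions and environment fields are descriptive only here; checking them before a run would be up to the test harness.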

Finally, an execution procedure document may be associated with a test specification or with one or more test cases; it describes the steps needed to execute them.

Such a document can specify how to start the test procedure, how to take measurements, which unforeseen events may occur during execution, and how to respond if they do.

Test Implementation

The documents described above are the necessary input for the test implementation phase, which turns the output of the design phase into actual tests: individual test cases are evaluated and implemented as the specifications require.

This usually means creating procedures that run the tests automatically, so that they can be repeated whenever needed.

These procedures must check the conditions that determine whether the test passes or fails, by comparing the expected result for the test case with the value actually produced by the application.
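In a unit-testing framework, the pass/fail condition is typically expressed as an assertion comparing the expected and the actual value. This sketch uses Python’s standard `unittest` module; `apply_discount` is a hypothetical unit under test, assumed here for illustration.

```python
import unittest


def apply_discount(price_cents: int, percent: int) -> int:
    """Hypothetical unit under test: apply a percentage discount."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return price_cents * (100 - percent) // 100


class ApplyDiscountTest(unittest.TestCase):
    def test_ten_percent_discount(self):
        expected = 9000
        actual = apply_discount(10000, 10)
        # The test passes only if the actual value matches the expected one.
        self.assertEqual(actual, expected)

    def test_out_of_range_percent_is_rejected(self):
        # For invalid input, the expected behaviour is an exception.
        with self.assertRaises(ValueError):
            apply_discount(10000, 150)
```

Saved in a module, these tests can be run with `python -m unittest`, and rerun unchanged after every modification of the code.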

Automated procedures also make the tests highly reusable: after any change to the product, they need only marginal modification.

Regression tests are a typical example.

Implementation also draws on the previously defined execution procedure documents, which specify how the tests must be performed.

These documents can, of course, be revised during this phase if implementing the procedures proves difficult or more appropriate solutions are found.

At the end of implementation, the individual tests are often grouped into one or more “test suites”, or test collections.

A test suite is a structure that can launch all the tests it contains, without having to invoke them one by one.

Assigning homogeneous groups of tests to the same test suite also makes it possible to structure and order the tests more efficiently.
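With Python’s standard `unittest` module, for example, a suite can be built from homogeneous groups of tests and launched with a single call. The two test classes below are hypothetical placeholders for real test groups.

```python
import unittest


# Two hypothetical homogeneous groups of tests.
class ParsingTests(unittest.TestCase):
    def test_int(self):
        self.assertEqual(int("42"), 42)

    def test_split(self):
        self.assertEqual("a,b".split(","), ["a", "b"])


class SortingTests(unittest.TestCase):
    def test_sorted(self):
        self.assertEqual(sorted([3, 1, 2]), [1, 2, 3])


def build_suite() -> unittest.TestSuite:
    """Nest one sub-suite per group inside a single top-level suite."""
    loader = unittest.defaultTestLoader
    return unittest.TestSuite([
        loader.loadTestsFromTestCase(ParsingTests),
        loader.loadTestsFromTestCase(SortingTests),
    ])


# A single call launches every test in the suite, group by group.
result = unittest.TextTestRunner(verbosity=2).run(build_suite())
```

Because suites can contain other suites, each group stays a unit that can also be run on its own.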

Once the test suites have been implemented, this phase can be considered complete, although the technical documentation of the code written is usually generated at its end.

This document, commonly called the “API specification” (Application Programming Interface), describes at an abstract level the set of procedures created by the developer.
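In Python, one way to produce this kind of documentation is to write docstrings and render them with the standard `pydoc` module; dedicated generators such as Sphinx are also common. The function below is a hypothetical example.

```python
import pydoc


def apply_discount(price_cents: int, percent: int) -> int:
    """Return the price after a percentage discount.

    Args:
        price_cents: original price in cents.
        percent: discount, between 0 and 100.

    Raises:
        ValueError: if percent is outside the range 0..100.
    """
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return price_cents * (100 - percent) // 100


# Render the signature and docstring as plain-text API documentation.
doc_text = pydoc.render_doc(apply_discount, renderer=pydoc.plaintext)
print(doc_text)
```

From the command line, `python -m pydoc <module>` prints the same kind of documentation for a whole module.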

This documentation is particularly important for understanding how the test cases work and how they are structured, for interpreting errors and exceptions raised during their execution, for analyzing the results, and for debugging.

Indeed, once the implementation phase is over, the tests are executed, evaluated, and analyzed in detail.

Test execution and evaluation

Once implementation is finished and the necessary configurations and test environments have been set up, the tests can finally be run.

Usually it is enough to run one or more test suites containing all the tests implemented for the application.

Each test case that is run can have one of three outcomes: pass, failure, or error during test execution.

A test case passes when all of its pass conditions show that exactly the expected results have been obtained for every input provided.

When, on the other hand, one or more conditions detect values that differ from the expected ones, the test fails, indicating a probable defect in the code, which is reported accordingly.

Regardless of how the conditions evaluated up to that point have turned out, if an unexpected event occurs in the code under test or in the test itself, an unhandled exception is reported and the test’s outcome is an error.

Test execution first of all produces the so-called “Log”, a structured, chronological record of all the details of the test run, such as the name of each test performed, its execution time, the start date and time, and the result obtained.

By then studying the test log and cross-referencing the results with the completeness and termination criteria defined during planning and design, it is possible to perform the “test check”.

This check aims to establish whether all features have been tested and whether the tests are sufficient to cover the entire code and the different input classes identified earlier.

In general, the check estimates how exhaustive the tests are with respect to the objectives set in the test plan.
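Part of this check can be automated. The sketch below assumes, hypothetically, that the plan lists for each feature the input classes that must be exercised, and that executed tests are tagged with the classes they cover; it reports the untested classes and the fraction covered. Code coverage itself is normally measured with a dedicated tool (for Python, coverage.py) and is not shown here.

```python
def check_completeness(planned: dict[str, set[str]],
                       executed: dict[str, set[str]]) -> dict[str, set[str]]:
    """Return, per feature, the planned input classes that were never tested."""
    gaps = {}
    for feature, classes in planned.items():
        missing = classes - executed.get(feature, set())
        if missing:
            gaps[feature] = missing
    return gaps


def exhaustiveness(planned: dict[str, set[str]],
                   executed: dict[str, set[str]]) -> float:
    """Fraction of planned (feature, input class) pairs actually exercised."""
    total = sum(len(classes) for classes in planned.values())
    covered = sum(len(classes & executed.get(feature, set()))
                  for feature, classes in planned.items())
    return covered / total if total else 1.0


planned = {
    "login": {"valid credentials", "wrong password", "locked account"},
    "checkout": {"empty cart", "single item"},
}
executed = {
    "login": {"valid credentials", "wrong password"},
    "checkout": {"empty cart", "single item"},
}
print(check_completeness(planned, executed))       # {'login': {'locked account'}}
print(f"{exhaustiveness(planned, executed):.0%}")  # 80%
```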

If tests are found to be missing or redundant, the existing test set is modified accordingly.

Once the complete set of tests has been run for the application, the results are evaluated.

The first step is to save the logs of the tests run in dedicated repositories or databases.

Storing this information persistently is important for tracking how a product evolves, and it is fundamental when comparing different versions in regression testing.
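As a minimal sketch of such a repository, the code below stores one outcome per test and version in SQLite (via Python’s standard `sqlite3` module) and lists the tests that passed in an earlier version but no longer pass in a later one. The table layout and function names are assumptions; a real log would keep more fields, such as start time and duration.

```python
import sqlite3


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS test_log (
               version TEXT NOT NULL,
               test    TEXT NOT NULL,
               outcome TEXT NOT NULL,  -- 'pass', 'failure' or 'error'
               PRIMARY KEY (version, test)
           )"""
    )
    return conn


def save_run(conn: sqlite3.Connection, version: str, outcomes: dict[str, str]) -> None:
    with conn:  # commits the transaction if no exception occurs
        conn.executemany(
            "INSERT OR REPLACE INTO test_log (version, test, outcome) VALUES (?, ?, ?)",
            [(version, test, outcome) for test, outcome in outcomes.items()],
        )


def regressions(conn: sqlite3.Connection, old: str, new: str) -> list[str]:
    """Tests that passed in `old` but do not pass in `new`."""
    rows = conn.execute(
        """SELECT n.test FROM test_log AS o
           JOIN test_log AS n ON n.test = o.test
           WHERE o.version = ? AND n.version = ?
             AND o.outcome = 'pass' AND n.outcome <> 'pass'
           ORDER BY n.test""",
        (old, new),
    )
    return [test for (test,) in rows]


conn = open_store()
save_run(conn, "1.0", {"test_login": "pass", "test_checkout": "pass"})
save_run(conn, "1.1", {"test_login": "pass", "test_checkout": "failure"})
print(regressions(conn, "1.0", "1.1"))  # ['test_checkout']
```

Passing a file path instead of `":memory:"` to `open_store` keeps the history across runs.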

Second, an initial analysis of the defects found in the application is needed. This usually takes place while drafting the “Test Summary Report”, a set of assessments of the failures and errors found in the tests that require further investigation.

For unit tests, this document usually serves as input to the application’s debugging process; it contains the logs of the tests that revealed defects or went wrong, the possible causes and solutions, and the limitations found in the tests.

After debugging, test execution and evaluation can be repeated several times to confirm that the tested units work correctly.